Here are my notes from Alyssa Henry’s Keynote on Amazon Web Services. Alyssa is the GM of Amazon’s S3. Not much editing and no slides – yet – to link. Update: slides are here. Alert readers Tim and Justin found the link. Thanks, guys. Update 2: I’ve added a couple of her slides to the post. End updates.

The quotes are hers. I didn’t catch them all.

Goals

- Durable

- 99.99% availability

- Scalability

- Scalability – virtually infinite

- Support an unlimited number of web scale apps

- Use scale as an advantage – linear scalability

- Vendors weren’t interested in engineering for the 1% – want to engineer for the 80%

- Secure

- Fast

- Simple

- Straightforward APIs

- Few concepts to learn

- AWS handles partitioning – not customers

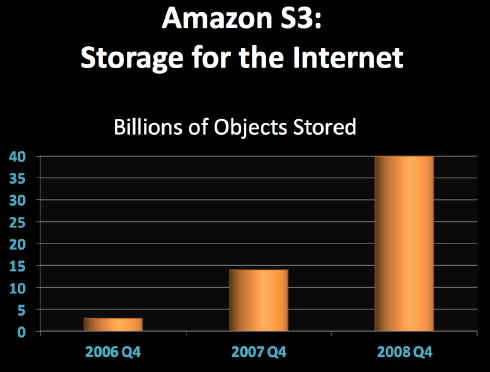

- Some customers have billions of objects

- Cost-effective

The big issue

Uncertainty is a constant at AWS. They don’t know:

- Predominant workload type

- Usage consistent or changing

- Object access frequency – volume – locality – lifetime – size

- Use cases varied and may change – suddenly – or over time

- Must embrace uncertainty

This isn’t the standard data center mindset.

“Everything is vague to a degree you do not realize till you have tried to make it precise.”

-Bertrand Russell

Security

Amazon stores millions of credit card numbers – a criminal bonanza and a commercial disaster if breached. Hence secure protocols, authentication mechanisms and access controls, all of it log-able.

Forensic drive wipes. Not transparent about where their data centers are located.

Need for speed

You’ve heard numbers about how quickly people start leaving as response times rise. So has Amazon.

S3 latency insignificant relative to Internet latency. They reduce Internet latency by adding new locations. They also perform multiple low-latency retries before returning a high-latency error to customers.

Simple

Simple self-service with a straightforward API. Few concepts to learn, limited command sets.

For storage they handle partitioning, not customers. Just throw it in the bucket. And some customers have billions of objects.

Cost effective

Pay as you go. Pay only for what is used. No long term contracts or commitments.

Amazon uses their own software and massive scale on commodity boxes to reduce costs.

Failure happens

“Try again. Fail again. Fail better.” -Samuel Beckett

What are the odds? Many failures happen frequently – but even low probability events happen at high scale.

- Natural disasters

- Load balancers corrupt packets

- Techs pull live fiber

- Routers black hole traffic

- Power and cooling failure

- NICS corrupt packets

- Disk drives fail

- Bits rot
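The point that rare events become routine at scale is easy to check with arithmetic. Here is a minimal sketch, assuming independent events and a made-up one-in-a-million failure probability. Neither the numbers nor the function name come from the talk.

```python
def prob_at_least_one(p: float, n: int) -> float:
    """Probability that at least one of n independent trials fails,
    given a per-trial failure probability p."""
    return 1.0 - (1.0 - p) ** n


# Hypothetical per-operation failure probability, for illustration only.
one_in_a_million = 1e-6

# As the number of operations grows, "rare" stops being rare.
for n in (1_000, 1_000_000, 1_000_000_000):
    print(f"{n:>13,} operations -> P(at least one failure) = "
          f"{prob_at_least_one(one_in_a_million, n):.6f}")
```

Real failures are often correlated rather than independent, so treat this as intuition rather than a model.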

Failure types

Scope: small to large

Duration: temp or permanent

Effect: harmless to catastrophic

Techniques

“Do not let what you cannot do interfere with what you can do.”

John Wooden

Amazon’s basic techniques for dealing with the depressing litany of tech failure include:

-Redundancy

Department of redundancy department.

A broadly applicable technique that increases durability, availability, cost, and complexity. Interesting analogy: seat belt & air bag vs belt & suspenders.

The focus is on avoiding catastrophe (seat belt/air bag) rather than over-engineering to avoid inconvenience (belt/suspenders).

Plan for the catastrophic loss of entire data center: redundantly store data in different data centers. Expensive, but once you’ve done it smaller features become belt & suspenders kinds of features: costly, inconvenient and they don’t solve big problems. [Ed. note: like RAID.]

-Retry

Resolves temporal failures: real time or later – quicker for AWS to retry than for customers to do it. Leverage redundancy – retry from different copies.
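A rough sketch of “retry from different copies”, assuming each replica is just a callable that returns the object or raises `IOError`. The names `read_with_retry`, `AllReplicasFailed` and the two demo replicas are hypothetical; this is not how S3 is implemented.

```python
from typing import Callable, Optional, Sequence


class AllReplicasFailed(Exception):
    """Raised when every copy has been tried and none answered."""


def read_with_retry(
    key: str,
    replicas: Sequence[Callable[[str], bytes]],
    attempts_per_replica: int = 2,
) -> bytes:
    """Try each replica in turn, retrying a few times before moving on."""
    last_error: Optional[Exception] = None
    for fetch in replicas:
        for _ in range(attempts_per_replica):
            try:
                return fetch(key)
            except IOError as exc:
                last_error = exc  # remember the failure, then try again
    raise AllReplicasFailed(key) from last_error


# Hypothetical replicas for the demo: one that is down, one that works.
def broken_replica(key: str) -> bytes:
    raise IOError("replica unavailable")


def healthy_replica(key: str) -> bytes:
    return b"payload for " + key.encode()


print(read_with_retry("photo.jpg", [broken_replica, healthy_replica]))
```

The caller sees one successful read. The failed attempts against the first copy stay inside the service, which is the point of retrying on the provider’s side.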

-Idempotency

An idempotent action is one whose result doesn’t change if the action is repeated. Taking a number’s absolute value or reading a customer record are actions that can be repeated without changing the result. If an idempotent action is taking too long, run it again. Lather, rinse, repeat.
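To make the distinction concrete, here is a small sketch contrasting an idempotent write with a non-idempotent one. The functions `put_object` and `append_record` are hypothetical illustrations, not AWS APIs.

```python
from typing import Dict, List


def put_object(store: Dict[str, bytes], key: str, value: bytes) -> None:
    """Idempotent: writing the same value to the same key twice
    leaves the store in the same state as writing it once."""
    store[key] = value


def append_record(log: List[bytes], value: bytes) -> None:
    """Not idempotent: every repeat adds another copy."""
    log.append(value)


store: Dict[str, bytes] = {}
put_object(store, "customer/42", b"Ada")
put_object(store, "customer/42", b"Ada")  # a retry changes nothing
print(store)

log: List[bytes] = []
append_record(log, b"charge $10")
append_record(log, b"charge $10")  # a retry duplicates the effect
print(log)

# The absolute-value example from the notes: repeating it is harmless.
print(abs(abs(-7)) == abs(-7))
```

Blind retries are only safe against the first kind of operation.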

-Surge protection

Rate limiting is a bad idea – build the infrastructure to handle uncertainty. Don’t burden already stressed components with retries. Don’t let a few customers bring down the system.

Surge management techniques include exponential back off (shades of CSMA/CD!) and caching TTL (time to live) extensions to avoid waiting for renewals.
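Exponential back off is simple to sketch. The version below assumes a doubling delay with a cap and treats `IOError` as the retryable failure. The parameter names and default values are mine, not Amazon’s.

```python
import time
from typing import Callable, Iterator, TypeVar

T = TypeVar("T")


def backoff_delays(base: float, factor: float, cap: float,
                   attempts: int) -> Iterator[float]:
    """Yield delays that grow geometrically but never exceed the cap."""
    delay = base
    for _ in range(attempts):
        yield min(delay, cap)
        delay *= factor


def call_with_backoff(
    op: Callable[[], T],
    retries: int = 5,
    base: float = 0.1,
    factor: float = 2.0,
    cap: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call op; on IOError wait a growing interval and try again.

    Makes at most retries + 1 attempts, then re-raises the last error.
    """
    delays = backoff_delays(base, factor, cap, retries)
    while True:
        try:
            return op()
        except IOError:
            delay = next(delays, None)
            if delay is None:
                raise  # retries exhausted: surface the last error
            sleep(delay)
```

Each failed attempt waits longer than the one before it, so a struggling component sees fewer retries just when it can least afford them. Production clients usually add random jitter to the delay so that many callers don’t retry in lockstep; I left it out to keep the sketch deterministic.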

-Eventual consistency

Spectrum of choices. Time lapse typically result of node failure.

Amazon sacrifices some consistency for availability. And sacrifices some availability for durability. A matter of priorities.

For example, objects are written to multiple data centers. Within each data center there are multiple pointers to the objects in case a pointer gets corrupted. Pointers are cheap; retrieving an object from another data center isn’t.

-Routine failure

Component failure is normal. Everything fails. Therefore don’t have unused/rarely used code paths since they are most likely to be buggy.

Amazon routinely fails disks, servers, data centers. For data center maintenance they just turn the data center off to exercise the recovery system.

-Diversity

Monocultures are risky. For software there is version diversity: they engineer systems so different versions are compatible.

They also maintain diversity within commodity hardware. Had one case where all the drives from one vendor failed. Another case where a storage server had a firmware bug: a failed drive would nuke the server. Another shipment of servers had faulty power cords. Correlated failures happen – especially at scale.

Diversity of workloads: customer workloads can be interleaved. Amazon is not a monoculture.

-Integrity checking

Identify corruption inbound, outbound and at rest. Interesting failure mode: NICs that corrupt data after computing the checksum. Store checksums and compare at read – plus scan all the data at rest as a background task.

Application level check-summing: increases cost, complexity for the customer. Increases durability, availability.
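The store-and-compare idea can be sketched in a few lines. This toy in-memory store keeps a digest next to every object, verifies it on each read and offers a scrub pass over data at rest. SHA-256 and the class and method names are my assumptions; the talk didn’t say which checksum Amazon uses.

```python
import hashlib
from typing import Dict, List, Tuple


class CorruptObject(Exception):
    """Raised when stored bytes no longer match their stored checksum."""


class ChecksummedStore:
    def __init__(self) -> None:
        # key -> (data, hex digest computed at write time)
        self._blobs: Dict[str, Tuple[bytes, str]] = {}

    @staticmethod
    def _digest(data: bytes) -> str:
        return hashlib.sha256(data).hexdigest()

    def put(self, key: str, data: bytes) -> None:
        self._blobs[key] = (data, self._digest(data))

    def get(self, key: str) -> bytes:
        data, expected = self._blobs[key]
        if self._digest(data) != expected:  # compare at read
            raise CorruptObject(key)
        return data

    def scrub(self) -> List[str]:
        """Background-style scan: return keys whose data has rotted."""
        return [key for key, (data, expected) in self._blobs.items()
                if self._digest(data) != expected]
```

A client that also computes its own digest before sending can catch corruption introduced on the way in, which is the application-level variant and carries the extra cost and complexity noted above.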

-Telemetry

Internal, external. Real time, historical. Per host, aggregate. Strong telemetry platform.

-Autopilot

Human processes fail. Human reaction time is slow. Want system to be on autopilot and just run. If a human screws up an Amazon system, it isn’t the human’s fault. It’s the system.

Final thoughts

Storage is a lasting relationship that requires trust.

Reliability at low cost achieved through engineering, experience and scale.

The StorageMojo take

Amazon is the world leader in scale out system engineering. Google may have led the way, but the necessity to count money and ship products set a higher bar for Amazon. Plus they made every mistake in the book getting to where they are today. Hard-won learning.

Amazon Web Services is a logical extension of Amazon’s massive infrastructure investment. It may very well dwarf their products business in a few short years. I’d like to see them open the kimono more in the future.

Courteous comments welcome, of course. In a later conversation with Alyssa I noted that Google seems to have a problem scaling their clusters beyond 7-8 thousand nodes and asked where Amazon’s clusters topped out. She said they hadn’t seen that problem yet. Hmm-m-m. . . .

Extremely interesting stuff – and what it says very strongly is that you need an application architecture which can exploit (and withstand) infrastructure “glitches”. However, very many businesses don’t have the scale and the engineering ability to do this – often their systems are integrations of “best of breed” and legacy applications which don’t easily deal with issues such as geographic separation, latency, inconsistent data and so on.

For many companies, the mantra used to be to buy and not build applications. Now it might be that a really advanced service provider could extend the SaaS model to provide easy self-build of web-based application components on a common infrastructure to allow more modest sized organisations to utilise these sort of platforms. However, I still think that’s a major challenge for many companies.

It would also appear that many of the other massively-scalable e-businesses on the Internet have similar general approaches, some of whom have been more successful than others (take something like YouTube and they clearly have big issues of keeping data in step in a timely manner, but maybe that doesn’t matter for their services – if eBay goes down, tens of thousands of traders lose business; if there is a YouTube glitch, it’s just a minor annoyance).

Two things jumped out:

1. More sites to deal with Internet latency. They can’t speed things up with more or faster storage in a data center, so they build more data centers. The power of having a big enough plan!

2. Seat belts and air bags vs. belts and suspenders. Great analogy! Can you expand on this part of her presentation?

Thanks for sharing, very interesting topic and speaker.

Hi — James Hamilton posted the slides here:

http://perspectives.mvdirona.com/2009/02/25/FAST2009KeynoteAWSS3.aspx

james hamilton has posted the slides:

http://perspectives.mvdirona.com/2009/02/25/FAST2009KeynoteAWSS3.aspx

“In a later conversation with Alyssa I noted that Google seems to have a problem scaling their clusters beyond 7-8 thousand nodes and asked where Amazon’s clusters topped out. She said they hadn’t seen that problem yet. Hmm-m-m. . . .”

Look at Google now and think back to Google in 1999. What’s changed? I’d say not much. The reason they’ve topped out is that they’ve stopped innovating. The worst thing happened to them, they won.

If they had evolved search beyond firing some keywords into a text box they’d need another scale jump and maybe even a rethink of their architecture, but they’re not so what they have is good enough. Their innovation cycle is over and their biggest initiatives are a web browser (So 1990s) and a mobile phone operating system (So early 2000s)

Great notes Robin – makes me wish I had gone. I’ll have to read your blog more frequently again. Raises the question, at what point does it make sense for the average enterprise to look at ‘internet-scale’ tactics?

Don, I’ve been noodling around that very problem for a while. The CapEx advantages don’t seem large enough to justify it – sure, scale out clusters are cheaper, but it would take a lot for the average enterprise to notice due to legacy overhead – but the management advantages are another story.

If the enterprise has a large volume of not-very-hot data that they want online – think media, health, geophysical – then the management advantages make sense. More later.

Robin