Cumulative distribution functions

The paper describes three large-scale Google workloads:

- Websearch: high request throughput and large data processing requirements for each request – IIRC 70-100 MB of data searched per request – with variable demand by time of day.

- Gmail: A more disk-intensive service whose machines tend to be configured with more disk drives, with each request accessing a relatively small number of servers. Also correlated with TOD.

- Mapreduce: large, offline batch jobs, running on hundreds or thousands of machines involving terabytes of data.

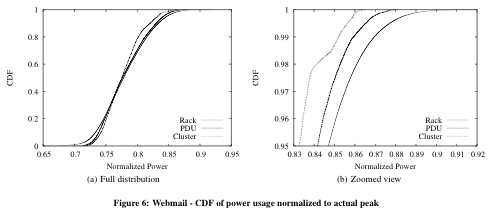

They picked a sample of 5,000 servers running each of the workloads. Since they want to limit power usage peaks and valleys, they took a close look at power usage at the limit.

Websearch

The full distribution diagram shows that rack power usage starts at 45% of peak load and rises to 98% when, presumably, all the machines in the rack are operating near peak power. Most of the time the rack is in the 60-80% range.

Portfolio effect

The “PDU” (power distribution unit) curve covers about 20 racks, or 800 machines, while the “cluster” curve is the CDF for about 5,000 machines. The curves show the smoothing effect of large numbers: the entire cluster never gets above 93% of its peak.
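To see why bigger groups peak lower, here is a minimal simulation sketch. It is not the paper's method. It assumes every machine has the same peak power and draws each machine's load independently and uniformly between 45% and 100% of peak. Real workloads are correlated by time of day, so real smoothing is weaker than this. The group sizes come from the post; the function name and parameters are made up for illustration.

```python
import random

def simulated_peaks(steps=200, seed=0):
    """Highest observed group power, as a fraction of the group's aggregate peak.

    Assumption: machine loads are independent and uniform in [0.45, 1.0].
    """
    rng = random.Random(seed)
    groups = {"rack": 40, "pdu": 800, "cluster": 5000}
    peaks = {name: 0.0 for name in groups}
    for _ in range(steps):
        # One power sample (fraction of machine peak) per machine.
        draws = [rng.uniform(0.45, 1.0) for _ in range(groups["cluster"])]
        for name, size in groups.items():
            # With identical machines, normalized group power is the mean.
            avg = sum(draws[:size]) / size
            peaks[name] = max(peaks[name], avg)
    return peaks

if __name__ == "__main__":
    for name, peak in simulated_peaks().items():
        print(f"{name:8s} highest observed power: {peak:.1%} of peak")
```

The larger the group, the closer its worst case sits to the average, which is the portfolio effect the curves show.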

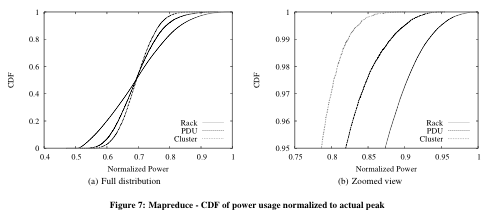

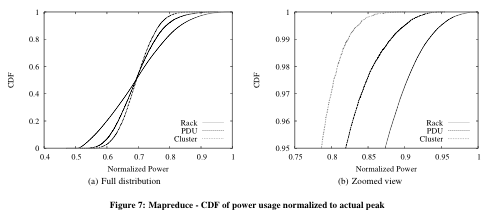

Here are the figures for Gmail and Mapreduce:

Google’s own commercial data center?

Google could have stopped with their own highly tuned applications. Instead they included an unnamed data center whose workloads are lower because they are

. . . in development, or simply not highly loaded. For example, machines can be assigned to a service that is not yet fully deployed, or might be in various stages of being repaired or upgraded, etc. Figure 9 shows the power CDF for one such datacenter. We note the same trends as seen in the workload mix, only much more pronounced. Overall dynamic range is very narrow (52 – 72%) and the highest power consumption is only 72% of actual peak power. Using this number to guide deployment would present the opportunity to host a sizable 39% more machines at this datacenter.
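The 39% figure follows from simple arithmetic: if a facility provisioned for 100% of peak never draws more than 72%, the same power budget covers 1/0.72 as many machines. A quick sketch, with a hypothetical function name, assuming added machines behave like the existing ones:

```python
def extra_machine_fraction(observed_peak_fraction: float) -> float:
    """Fraction of additional machines that fit in the same power budget.

    Assumption: new machines have the same power profile as existing ones.
    """
    if not 0.0 < observed_peak_fraction <= 1.0:
        raise ValueError("observed peak must be in (0, 1]")
    return 1.0 / observed_peak_fraction - 1.0

if __name__ == "__main__":
    print(f"{extra_machine_fraction(0.72):.0%}")  # prints 39%
```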

Is this a Google datacenter for the company’s internal operations, as posited in “So what does Google use when they aren’t Googling?”

Power save

The point of this study was to evaluate power-saving techniques once power usage was understood. Google evaluated two power saving techniques.

CPU voltage/frequency scaling has been implemented on some AMD and Intel chips. When the processor is less busy it reduces its input voltage and clock frequency to save power.

Modeling CPU v/f scaling under data center loads, Google found that datacenters could see power savings of 15-25%, depending on how aggressively it was used. They also found that I/O bound servers benefitted less than compute-intensive workloads.
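For intuition on why lowering both voltage and frequency pays off, the textbook approximation is that a chip's dynamic power scales with voltage squared times frequency. The sketch below uses that approximation only. It is not Google's model, it ignores static (leakage) power, and it covers the CPU alone, so whole-server savings are smaller. The function name and scale factors are illustrative.

```python
def relative_dynamic_power(voltage_scale: float, frequency_scale: float) -> float:
    """CPU dynamic power relative to full speed, using P ~ C * V^2 * f.

    Assumption: switched capacitance C is unchanged and leakage is ignored.
    """
    return voltage_scale ** 2 * frequency_scale

if __name__ == "__main__":
    # Hypothetical operating point: 90% voltage, 80% clock frequency.
    print(f"{relative_dynamic_power(0.9, 0.8):.3f}")
```

Because the CPU is only one part of a server's power draw, I/O-bound machines have less to gain, which fits Google's finding above.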

Non-peak power efficiency

Another option is to improve non-peak power efficiency. Most “efficiency per watt” metrics are based on peak loads, but Google found that systems spend little time at “peak”. Google found that idle system power never dropped below 50% of peak load, while ideally an idle system’s consumption would drop to zero.

If idle power were only 10% of peak power, an enterprise data center could save 50% on its power. Even Google’s well-behaved apps would see savings in the 30%+ range.
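A rough way to check figures like these is a linear power model, where a server draws its idle power at zero utilization and rises in a straight line to peak. That linear shape is an assumption for illustration, not necessarily the model used in the paper, and the function names are made up.

```python
def power_fraction(utilization: float, idle_fraction: float) -> float:
    """Power as a fraction of peak, assuming a linear idle-to-peak model."""
    return idle_fraction + (1.0 - idle_fraction) * utilization

def savings(utilization: float, idle_before: float = 0.5,
            idle_after: float = 0.1) -> float:
    """Fractional power saved by lowering idle power at a given utilization."""
    before = power_fraction(utilization, idle_before)
    after = power_fraction(utilization, idle_after)
    return 1.0 - after / before

if __name__ == "__main__":
    for u in (0.0, 0.2, 0.5, 0.8, 1.0):
        print(f"utilization {u:.0%}: savings {savings(u):.0%}")
```

Under this model the savings shrink as utilization rises and vanish at full load, so lightly loaded data centers gain the most.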

The StorageMojo take

Google’s been pushing power savings for five years now and it is all to the good. The average data center has so many high-cost items that power costs don’t garner much attention. Google’s unique infrastructure makes power important to them. And since they buy a half million servers a year, vendors care.

The important finding of this paper is that the big power savings come from optimizing non-peak power usage. This means optimizations at the motherboard and sub-system levels – like storage – that many companies can contribute to.

Google will continue to push the non-peak power efficiency issue hard, and that is good for all of us. For home and office users the savings could be substantial since typical office tasks rarely stress a system. While many question the validity of Google’s cluster-based architecture for data center use, their focus on power-saving will benefit us all.

Comments welcome, of course. Wes, this is your area of research interest. What is your perspective?

Robin,

Reducing idle power is worth doing, but it’s not my problem since I don’t design chips. 🙂 There may be more vendor emphasis on this topic once the SPECpower benchmark is released. There were two independent studies recently showing that peak power efficiency and non-peak efficiency are quite different for Intel and AMD processors, so Google isn’t the only one paying attention.

Saving power is nice because it reduces your power bill, but IMO the key point of the warehouse paper is power provisioning. If you put a 30A circuit into a rack but use only 20A of it, then you are wasting infrastructure. Watch for my papers on this topic.

Reducing idle power is not something particularly useful to us since our large 1000+ cpu clusters run at over 95% utilization.