This morning Woven Systems announced their new 10 Gbit Ethernet switch. I named Woven “coolest hardware” at last year’s Datacenter Ventures conference. Harry Quackenboss, their CEO, promised they’d have the switch working in six months. Well, here it is a mere seven months later, and they’ve done it. My hat’s off to the engineering team.

Now let’s get into Woven’s Mojo.

I’d rather switch than fight

The switch is unique in several respects:

- 10 Gigabit ethernet only

- Up to 144 non-blocking ports on a single switch

- Up to 4,000 non-blocking ports in a fabric of Woven switches

- Built from commodity parts – with one vital exception

- Low-cost

- The killer feature: active congestion management

- Uses standard ethernet protocols

What is it going to kill?

It shouldn’t be a surprise that fibre channel has some features that storage systems find really useful. After all, FC was developed as a storage interconnect. So it has bandwidth, flow control, low latency and rapid failover.

Gigabit ethernet lacks in all these areas: limited bandwidth; lost packets in congested networks; high IP latency; and failover that is too slow for storage drivers to manage.

It looks like Woven has solved 3 of the 4

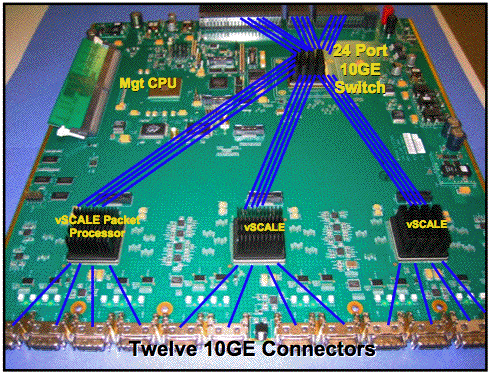

Woven’s secret sauce is built into an ASIC that sits in front of the commodity 24 port ethernet chip (picture helpfully provided by Woven).

The vScale Packet Processor – I don’t know what the “v” stands for – inserts low-overhead probe packets into the data stream. The vPP at the other end of the stream, be it in the same switch or one across a fabric, bounces them back, so the originating vPP has a real-time view of path latency. In milliseconds or less. It works across a fabric of up to 4,000 ports, ensuring QoS even as the fabric grows.

That’s pretty cool, but the coolest thing is this:

When path latency is too high, the vPP has two tools it uses to manage the latency.

- It can change to a less congested data path in less than 10ms

- It can pause the HBA using a standard ethernet protocol

I know what you are thinking:

Wow, path failover in 10ms – drivers won’t even notice.

And

Pausing HBAs when congestion strikes is flow control for ethernet – a process FC handles with buffer credits.

All done using standard ethernet protocols, albeit creatively.
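The two tools described above can be sketched as a toy decision function. This is only an illustration, not Woven's implementation: the `Path` type, the `manage_congestion` name, the 500-microsecond limit, and the choice to try rerouting before pausing are all assumptions made for the example. The announcement does not say which tool the vPP reaches for first or what latency it treats as too high.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical latency limit; Woven has not published its thresholds.
LATENCY_LIMIT_US = 500.0


@dataclass
class Path:
    name: str
    rtt_us: float  # latest round-trip time measured by a bounced probe packet


def manage_congestion(
    active: Path,
    alternates: list[Path],
    limit_us: float = LATENCY_LIMIT_US,
) -> tuple[str, Path]:
    """Pick an action for one probe result: "keep", "reroute", or "pause"."""
    if active.rtt_us <= limit_us:
        return ("keep", active)

    # Tool 1 (assumed to be tried first): move to the least congested
    # alternate path, provided that path is itself within the limit.
    if alternates:
        best = min(alternates, key=lambda p: p.rtt_us)
        if best.rtt_us <= limit_us:
            return ("reroute", best)

    # Tool 2: no acceptable path exists, so ask the sender (the HBA) to pause.
    return ("pause", active)


if __name__ == "__main__":
    congested = Path("a", 900.0)
    print(manage_congestion(congested, [Path("b", 200.0), Path("c", 50.0)]))
    print(manage_congestion(congested, [Path("b", 950.0)]))
```

The point of the sketch is that both actions hang off the same input, the measured round-trip time of the probes, which is why the real-time latency view matters so much.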

That bell you hear is tolling for Fibre Channel, which is about to meet its toughest competitor yet. Which may be why the FC over ethernet proposals are gathering steam in the T11 committee. Adding FC’s low latency protocol to a very fast and reliable 10 Gb switch adds real value and helps protect existing FC investment. Could be a nice win for all involved.

The StorageMojo take

I’m sure all the usual Internet Data Center suspects are lined up to beta Woven’s switch. Linking several hundred thousand servers via ethernet requires a lot of bandwidth, and 10GigE delivers. For the massive storage clusters it is an even bigger win: lost packets are still a pain even if the cluster can survive them.

If everything works as advertised, FC’s decline may be faster than forecast, at least among the large enterprise base that can use a switch of this size. Woven’s switch will be a shot in the arm for big clusters and the people who build them.

Update: I’d inadvertently left out the fact that you can cross-couple the switches to create a 4,000 port fabric so I’ve added it.

Update II: Harry, Woven’s CEO, helpfully added some budget pricing for all you folks with new fiscal years starting mid-year – like the Cisco tear-down guys – and I couldn’t just leave it buried in the comments.

Pricing will be finalized when general availability is announced (planned for Q3 2007), but a 144 10GE port configuration will be about $1500/10GE port, with fully-redundant fans, power supplies, and management cards.

Compare that to Cisco’s current $23k/port pricing and Riverstone’s very aggressive $10k/port pricing for full speed 10 Gb and the term “disruptive technology” just leaps to mind.
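To put those per-port numbers side by side, here is a back-of-the-envelope calculation. It assumes, purely for illustration, that each vendor's quoted per-port price scales linearly to a 144-port configuration, which is almost certainly not how real quotes work. Woven's figure is itself only "about" $1,500. The function names are made up for the example.

```python
# Per-port prices in US dollars, as quoted in the post.
PRICE_PER_PORT = {"Woven": 1_500, "Cisco": 23_000, "Riverstone": 10_000}
PORTS = 144  # size of the Woven configuration that was priced


def config_cost(vendor: str, ports: int = PORTS) -> int:
    """Cost of `ports` 10GE ports, assuming linear per-port pricing."""
    return PRICE_PER_PORT[vendor] * ports


def ratio_vs_woven(vendor: str) -> float:
    """How many times Woven's per-port price a vendor's price is."""
    return PRICE_PER_PORT[vendor] / PRICE_PER_PORT["Woven"]


if __name__ == "__main__":
    for vendor in PRICE_PER_PORT:
        print(f"{vendor}: ${config_cost(vendor):,} ({ratio_vs_woven(vendor):.1f}x Woven)")
```

On these assumptions the gap is roughly 15x against Cisco and nearly 7x against Riverstone.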

Comments welcome, of course. I spent six hours at NAB today and drove over 1,000 km, so moderation may be a bit sluggish today. Me too.

It would be interesting to compare the price and performance of the Woven and Quadrics switches.

Sounds great. So how much and when can I buy one?

Interesting indeed – this will be a hot year for switch gear!

I understand that an individual port can run at 10Gb, but what is the aggregate bandwidth for all 12 ports on the above board? Can they all run 10Gb sustained simultaneously?

On a line card, 12 ports can transmit and receive 10Gb simultaneously. Within a chassis, 144 ports can do so, for a switch fabric bandwidth in the chassis of 2.88 terabits/sec (144*10GE*2). Using a two-stage “fat tree” fabric of 144 port chassis, 4000+ ports can transmit and receive simultaneously with full cross section bandwidth.
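The arithmetic in that reply is easy to check. The sketch below counts each port at 10 Gbit/s in both directions, which is what the factor of 2 in the quoted formula represents. The function name is invented for the example.

```python
PORT_GBPS = 10  # line rate of one 10GE port, per direction


def full_duplex_gbps(ports: int, port_gbps: int = PORT_GBPS) -> int:
    """Aggregate bandwidth when every port sends and receives at line rate."""
    return ports * port_gbps * 2


if __name__ == "__main__":
    print(full_duplex_gbps(12), "Gbit/s per 12-port line card")
    print(full_duplex_gbps(144) / 1000, "Tbit/s per 144-port chassis")
```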

Let me know when you find out the price Tracy.

Nathan, this article said 750£ per port…

http://www.techworld.com/news/index.cfm?rss&newsid=8605

Pricing will be finalized when general availability is announced (planned for Q3 2007), but a 144 10GE port configuration will be about $1500/10GE port, with fully-redundant fans, power supplies, and management cards.

I have recently (re)joined Quadrics. I *do* have pricing for our 10GigE switches if folk are interested.

I know it is a year on from the previous postings – but our price per port is a fraction of that quoted for Woven’s.

But can it run SCUZZY!

Have you heard about Arastra?

They look pretty strong, especially since it is founded and funded by Sun’s co-founder Andy Bechtolsheim.

Arastra said it’s going with the pizza box approach for its first offering, as opposed to the more common modular switch, to target the top of the rack space — providing a more power and cost efficient means for data centers to connect their servers.

Under the hood, it sports Layer 2 and Layer 3 switching with throughput up to 960 Gb/s and 714 million packets per second with sub-microsecond latency.

The box has multi-chassis scaling of up to 4096 ports. It supports 10-Gbit SFP+ optics and copper cabling, including 10GBASE-SR, 10GBASE-LR, 10GBASE-LRM, 10GBASE-CR as well as Gbit E 1000BASE-SX, 1000BASE-LX and 1000BASE-T.

The 7100 series switches run the Arastra Extensible Operating System, based on Linux. The OS monitors and restarts processes automatically in response to failures.

The Arastra hardware comes in two models: the 7124S with 24 10G SFP+ ports and the 7148S with 48 10G SFP+ ports. Arastra said the switches have been in beta at several sites including government labs and financial institutions. Production units will be available in Q1 2008.

Arastra isn’t providing a price for the switch yet, although it says it’s estimating less than $400 per port.