Flash is weirder than you know – so keep reading – and you’ll know

I think I already mentioned that the flash guys refer to “programming” flash when a storage guy like moi would say “write.”

But they have a point. Flash is really an EEPROM. More than a write-once medium, but not a true random access device, either.

Update: I asked Ted Wobber, one of the paper’s authors, to comment on this post. He generously did so, and I have incorporated his comments after Update: flags. I thank Ted for his comments and StorageMojo readers for their understanding and support.

Let me count the ways

- Flash drives can only write zeros. Every write must be preceded by an erase because the only way to write a one is to erase first, which writes all ones. Every write means an erase followed by a write, which slows performance.

- To write a page you must first erase the entire block. NAND flash, the most common kind, is divided into blocks – typically 128 KB – and each block is divided into pages – typically 2 KB. To write a new page, the entire 128 KB block must be copied first – minus the pages due for rewriting – and then the entire block rewritten. Writes have a lot of latency. And small random writes? Don’t ask.

- There are no random writes in a block. Each block write starts with page 0 and proceeds in order to the 64th page. This is great for the blazing sequential write speeds that vendors happily quote, but it means that small random write performance is pretty awful.

- Block size is a tradeoff, not a given. As flash chip capacities grow, keeping block size constant means more blocks to manage. For example, if flash drives were divided into 512 byte blocks, a 64 GB flash drive’s block table would require 128 million entries and about 384 MB of storage. With a 128 KB block, the table size is a more manageable 524,288 entries and less than 2 MB of storage. Vendors have the opportunity to improve flash drive performance through smaller block sizes and better block management techniques.

- The most important piece of a flash drive is the translation layer. This software takes the underlying weirdness of flash and makes it look like a disk. The translation layer is unique to each vendor and none of them are public. Each makes assumptions that can throttle or help performance under certain workloads. What workloads? Hm-m-m-m.
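To make the first three points concrete, here is a minimal Python sketch of a single erase block. It is a toy model, not any vendor's translation layer: the class `ToyNandBlock` and the helper `rewrite_page` are hypothetical names, and the sizes are the typical 2 KB pages and 128 KB blocks quoted above. Programming can only clear bits, erase sets everything back to ones, and pages must be programmed in order.

```python
PAGE_SIZE = 2 * 1024       # 2 KB pages, the typical size quoted above
PAGES_PER_BLOCK = 64       # 64 x 2 KB = one 128 KB block


class ToyNandBlock:
    """Hypothetical model of one NAND erase block (illustration only)."""

    def __init__(self):
        # A fresh block is assumed to be erased: every bit is a one.
        self.pages = [b"\xff" * PAGE_SIZE for _ in range(PAGES_PER_BLOCK)]
        self.next_page = 0
        self.erase_count = 0

    def erase(self):
        """Erase works on the whole block and sets every bit to one."""
        self.pages = [b"\xff" * PAGE_SIZE for _ in range(PAGES_PER_BLOCK)]
        self.next_page = 0
        self.erase_count += 1

    def program(self, page_no, data):
        """Program one page. Bits can only go from one to zero."""
        if page_no != self.next_page:
            raise ValueError("pages must be programmed in order")
        if len(data) != PAGE_SIZE:
            raise ValueError("data must be exactly one page")
        old = self.pages[page_no]
        # The stored result is old AND new: zeros stick, ones need an erase.
        self.pages[page_no] = bytes(o & n for o, n in zip(old, data))
        self.next_page += 1


def rewrite_page(block, page_no, new_data):
    """Change one page the hard way: copy the block, erase it, and
    reprogram all pages in order. Returns the number of pages programmed."""
    copy = list(block.pages)
    copy[page_no] = new_data
    block.erase()
    for index, page in enumerate(copy):
        block.program(index, page)
    return PAGES_PER_BLOCK


block = ToyNandBlock()
programmed = rewrite_page(block, 5, b"\x00" * PAGE_SIZE)
print(f"changed 1 page, programmed {programmed} pages, erases: {block.erase_count}")
```

In this model a 2 KB change costs one erase plus 64 page programs. A real device would more likely copy into a different, already-erased block, but the amount of data moved is the same.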

What do you mean we, Kemo Sabe?

The last point deserves explication. Fortunately, some folks a lot smarter than me have done some work analyzing translation layer performance. Four amigos at Microsoft Research: Andrew Birrell, Michael Isard, Chuck Thacker, and Ted Wobber. In their paper A Design for High-Performance Flash Disks they spend some quality time analyzing a translation layer’s performance.

Short answer: random writes stink

What they found isn’t pretty. The flash translation layer, which takes NAND flash and makes it look like a block device to the USB mass storage protocol, creates performance potholes.

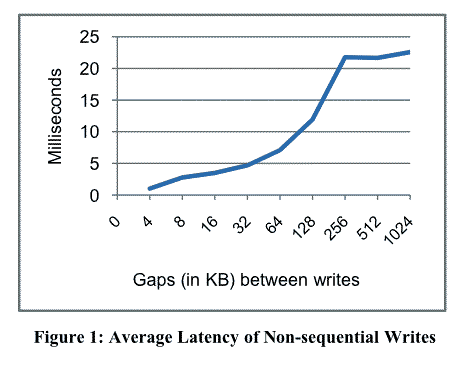

Random 4 KB writes incurred an average latency of 22 ms to completion. A blazing 190 KB per second. Hardly worse than a 1.8″ disk drive you say? There’s more.
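A quick sanity check on those numbers, as a small Python sketch. It assumes one write outstanding at a time, so throughput is simply write size divided by latency; the function name is made up for this example.

```python
def random_write_rate(write_kb, latency_ms):
    """Return (writes per second, KB per second) for back-to-back writes.

    Assumes a single outstanding write, so each write waits for the last.
    """
    writes_per_second = 1000 / latency_ms
    return writes_per_second, write_kb * writes_per_second


iops, kb_per_s = random_write_rate(write_kb=4, latency_ms=22)
print(f"{iops:.0f} writes/s, {kb_per_s:.0f} KB/s")
```

That works out to roughly 45 writes per second and a little over 180 KB per second, the same ballpark as the figure above.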

The gap between writes affects write latency in a big way.

The paired-write problem

They found a couple of interesting things:

- Not all flash translation layers have the same performance. Smart engineers have figured out ways to mask some performance issues. The bad news: you don’t know which is which.

- Even costly Mil-spec flash drives, like the M-Systems FFD-25, suffer from slow random writes when multiple writes are distributed across the drive.

- If there is an easy way around the paired-write problem, the flash drive vendors haven’t found it yet.

The cost of paired writes

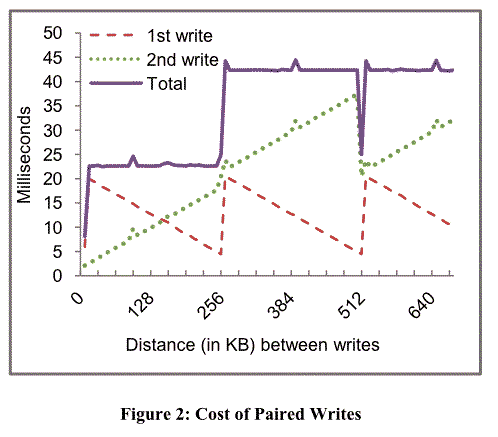

I really like this second figure from the Microsoft paper for demonstrating how the paired-write latencies add up:

Apparently this is the performance of the M-Systems drive, but the paper doesn’t make that explicit. Update: this chart refers, like the first one, to a Lexar flash key. The M-Systems drive has very fast writes within a 16 MB range, while performance degrades over a wider range. The MR guys didn’t attempt a full forensic analysis on the M-Systems device. I regret my interpretation error.

Isn’t the paper “High-Performance Flash Disks”?

The bulk of the paper, which any serious flash-crazed storage geek should read, is their design for overcoming these issues. As they candidly admit at the end of this paragraph:

In this paper we describe data structures and algorithms that mitigate the problem of slow, non-sequential writes. One of the main areas of concern is that in a UFD, power can fail at any time (because the user unplugs the UFD). Furthermore, it is essential that device power-ups be fast since users are unlikely to tolerate a perceptible slowdown when inserting a flash device. Dealing with these two constraints led to a fairly complicated, but we believe still practical design described here.

Which suggests a couple of things to me. One, notebook flash drives with good write performance should be simpler to design since you have a battery and no sudden power failures. Almost ditto for the power-up problem.

These guys have been working on the PC-on-a-keychain problem too, so these simplifications probably weren’t interesting. But if I wanted to conquer the notebook flash drive market I’d be very interested. So guys, if you read this, please comment on how much simpler your design could be with the notebook assumptions.

Update: Ted Wobber, one of the authors, commented thus:

You are right that designing for laptops where there already is a battery and where the drive can’t easily be removed eliminates some constraints. However, many hardware designers might be hesitant to produce a storage component that depends on continuity of the external environment (e.g. power) for data stability.

My comment in response: I appreciate the concern about power assumptions. If I had to guess, the number of notebooks stolen exceeds the number of notebooks whose power suddenly ceases. I’m thinking the flash drive needs a couple of seconds, max, to store its state – or whatever it needs to store, being a non-volatile storage device. So from a real-world, non-fastidious marketing guy perspective, the power-always assumption could be relaxed a bit without much danger to the very foundations of Western civilization. Which, depending on how you feel about Dick Cheney, may or may not be an acceptable risk.

Update: More comments from Ted:

The only thing I’d have a slight quibble about is the bit about vendors having a lot of flexibility with respect to block sizes. Although I’m not a hardware guy, I’d be about 99% sure of the following. NAND flash has a slow erase time. Memory is organized into large blocks in order to erase many pages at a time, and this is to achieve an economy of scale (parallelism). If you had to erase pages serially at 1+ ms per pop, digital cameras wouldn’t be happy since it would add .5 seconds per MB when storing an image (500 2k-pages x .001 s).

In fact, the current trend is to make blocks BIGGER as flash chips scale up. The latest SAMSUNG chips have 4K pages and 256K blocks.

As you know, our paper was about dealing with the physics of NAND flash (e.g. the big block sizes) without the random write penalty.

I have some thoughts about a couple of Ted’s points, which are competing with Ms. Mojo’s thoughts for my time. Sorry, Ted!
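The block size tradeoff can be put in numbers with a short Python sketch. Two assumptions are mine, not the paper's: 3 bytes per block table entry (inferred from 384 MB for 128 million entries) and a flat 1 ms per erase operation whatever the erase unit size, following Ted's "1+ ms per pop". The function names are hypothetical.

```python
KIB = 1024
MIB = 1024 * KIB
GIB = 1024 * MIB

ENTRY_BYTES = 3    # assumption: inferred from 384 MB / 128 million entries
ERASE_S = 0.001    # assumption: about 1 ms per erase, whatever the unit size


def block_table(capacity_bytes, block_bytes, entry_bytes=ENTRY_BYTES):
    """Return (entries, table size in bytes) for a given block size."""
    entries = capacity_bytes // block_bytes
    return entries, entries * entry_bytes


def erase_seconds_per_mib(erase_unit_bytes, erase_s=ERASE_S):
    """Time spent erasing, one unit after another, to free 1 MiB."""
    return (MIB // erase_unit_bytes) * erase_s


for block_bytes in (512, 128 * KIB, 256 * KIB):
    entries, table_bytes = block_table(64 * GIB, block_bytes)
    print(f"{block_bytes:>7} B blocks: {entries:>11,} entries, "
          f"{table_bytes / MIB:8.2f} MiB table")

for unit in (2 * KIB, 128 * KIB):
    print(f"erase unit {unit // KIB:>3} KiB: "
          f"{erase_seconds_per_mib(unit):.3f} s of erase per MiB")
```

Under these assumptions, a 64 GiB drive needs about 134 million entries and 384 MiB of table with 512-byte blocks, and 524,288 entries and 1.5 MiB with 128 KiB blocks. Erasing 2 KiB at a time costs about half a second per MiB, which is Ted's camera example, while erasing 128 KiB at a time costs about 8 ms. Smaller blocks shrink the random-write penalty but pay for it in table size and erase time.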

The StorageMojo take

The bottom line is that flash drive write performance will be all over the map as engineers try to optimize for a wide range of workloads. The software fix is to have file systems bunch writes, à la log-structured file systems. But that is a lot of work. It is easier to fix flash drive architecture and then build in volume.
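For the curious, here is roughly what "bunching writes" means, as a minimal Python sketch. It is not the design from the Microsoft paper or any real file system: `LogStructuredBuffer` is a hypothetical class that collects scattered page writes in RAM, writes them out as one full sequential block, and keeps a map from logical page to physical location. It has no garbage collection, and the pending buffer is lost if power fails, which is exactly the worry Ted raises.

```python
PAGE_SIZE = 2 * 1024
PAGES_PER_BLOCK = 64       # one 128 KB block per flush


class LogStructuredBuffer:
    """Hypothetical sketch: turn random page writes into sequential blocks."""

    def __init__(self):
        self.pending = {}   # logical page -> data waiting in RAM (latest wins)
        self.mapping = {}   # logical page -> (physical block, page index)
        self.flash = []     # each entry is one block: a list of page payloads

    def write(self, logical_page, data):
        """Accept a write anywhere; flush once a full block has gathered."""
        self.pending[logical_page] = data
        if len(self.pending) == PAGES_PER_BLOCK:
            self.flush()

    def flush(self):
        """Append the pending pages, in order, as the next block."""
        if not self.pending:
            return
        block_no = len(self.flash)
        block = []
        for index, (logical_page, data) in enumerate(self.pending.items()):
            block.append(data)
            # Re-point the logical page; any older copy becomes stale.
            self.mapping[logical_page] = (block_no, index)
        self.flash.append(block)
        self.pending.clear()

    def read(self, logical_page):
        """Return the newest copy, from RAM if it has not been flushed yet."""
        if logical_page in self.pending:
            return self.pending[logical_page]
        block_no, index = self.mapping[logical_page]
        return self.flash[block_no][index]


buf = LogStructuredBuffer()
for i in range(PAGES_PER_BLOCK):
    # 64 writes scattered across the address space...
    buf.write(i * 1000, bytes([i]) * PAGE_SIZE)
# ...land on flash as a single sequential block.
print(f"blocks written: {len(buf.flash)}, pages in first block: {len(buf.flash[0])}")
```

Sixty-four random 2 KB writes become one sequential 128 KB block write. The price is the mapping table and the cleanup of stale pages, which is where the "lot of work" comes in.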

Big sensor networks hoping to save on RAM-based SSDs by going to flash – keep hoping. I think it will be a while.

The guys at the hardware eval sites will have their work cut out for them figuring out flash drive performance. If a co-inventor of Ethernet and numerous other advances can’t figure it out, what hope can mere one-leg-at-a-time mortals have?

Comments welcome, as always. Moderation is a virtue, except in the defense of liberty.

Maybe a copy-on-write, allocate-on-flush filesystem that packs written data into contiguous 128KB blocks would be really fast on flash; I wonder where we could find such a thing.

Hmmm….If Apple had such a filesystem, they could use it for laptops with flash memory… I wonder if someone has such a thing for Apple to borrow….

Wes, Brainy,

Could ZFS do such a thing?

I’ve asked one of the ZFS team for a comment and I’m hoping maybe we’ll see one this weekend.

Robin

Robin, I thought it was clear 🙂

It is just a matter of time before Apple brings out new laptops with flash memory….ZFS could be the filesystem of choice, as it transforms random writes into sequential writes…

Oh BTW, if you have missed it, there is a comparison between EXT3, NTFS and ZFS:

http://blogs.sun.com/Peerapong/entry/solaris_zfs_performance